Introduction

Mainstream journalists pursue facts while under pressure to draw big audiences.

Today, core journalism norms are being challenged, starting with the pursuit of objectivity.

Once upon a time, I was among those who believed that the growth of the Internet could lead to more informed, inclusive, and engaged communities. In this 2013 video, Dori Maynard, the late director of the Maynard Institute, recalls the missed opportunities at the dawn of the Internet age:

Here we had a chance, back then, when we really could have built in structures that ensured that we were as inclusive as possible. Instead, the people driving the conversation told us, 'Don't worry your pretty little head about that. This is all going to be taken care of.' What we ended up doing was replicating the structure of the traditional media...

[On] the issue of race in... technology, ... you heard, 'Oh, no. The Internet is going to solve all this. Race and gender will no longer matter.' (Maynard)

In his book Whose Global Village, Ramesh Srinivasan warns against "the assumption that the technology 'naturally' shapes interactions between members of a community and those outside it that are positive for all involved... We cannot simply trust our gateways to the digital world as if they are democratically designed platforms." Instead, we should "consider the intentions behind technology initiatives, and the way they are framed."

As a reporter for ProPublica's Machine Bias series, Julia Angwin, founder and editor of The Markup, helped reveal how seemingly neutral technologies encode and monetize racism.


What intentions and values guide the push toward computational journalism, solutions journalism and news integrity? What are our unexamined assumptions about the nature of journalistic truth, the value of hierarchical databases and algorithms, and the relationships between news providers and their publics?

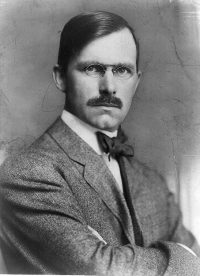

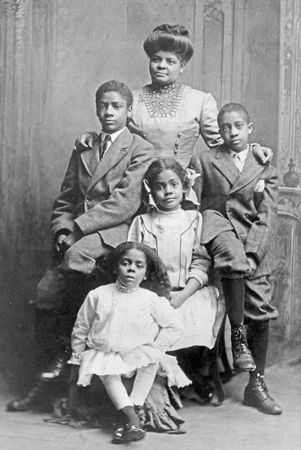

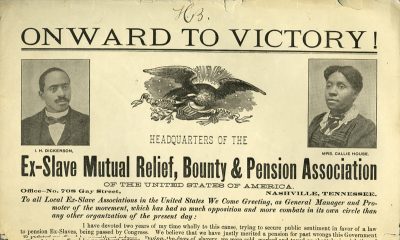

This essay will consider these questions in the context of evolving notions of objective journalism, and in light of emerging scholarship on collaborative technology development. Part one will focus on an exchange of letters between journalist Ray Stannard Baker and sociologist, historian and journalist W.E.B. Du Bois over Baker's coverage of race. Both men cared about facts. Baker's journalism was arguably solutions-oriented, and he sought feedback on his drafts from Du Bois.

Du Bois took issue with aspects of Baker's reporting that he felt reflected fears and stereotypes. He was only partially successful in persuading Baker to make revisions. In addition, there were important sources that both men appear to have ignored or, at least, failed to acknowledge.

Part two will examine the Baker-Du Bois exchange in light of critical scholarship on the concept of objectivity in journalism and the social sciences as it emerged from the mid-19th to the early 20th centuries. In particular, we will consider the work of David Mindich, Khalil Muhammad, and Natalie Byfield.

These scholars have identified ways in which the "just the facts" creed of early 20th-century journalism was limited by ingrained biases of race, class and gender. Mindich identified how race, class and gender bias shaped the editorial judgment of 1890s-era newspaper editors. Keri Leigh Merritt explains how Southern elites strategized to keep poor white people from making common cause with enslaved Black people, and why that's still relevant. Byfield and Muhammad have argued that these biases would be reflected in "objective" news reporting practices throughout the 20th century, even as newsrooms sought to diversify their staffs.

Part three will explore the requirements for inclusive and culturally competent computational journalism. We'll look at how some scholars and journalists are rethinking objectivity around values of verification, fairness, and understanding the needs of specific audiences and communities. These new ideas respond to Jonathan Stray's 2010 call to design journalism to be used. They also arise from a growing recognition that journalism practice and education must move away from an "extractive model" to one that is collaborative with and accountable to the communities it aims to serve.

Part four will consider how emerging best practices for collaborating with communities are informing a new approach to computational journalism, exemplified by such organizations as Chicago's City Bureau.